Innsbruck Multi-View Hand Gestures (IMHG) Dataset

Hand gestures are a natural form of communication in human-robot interaction and can be used to delegate tasks from a human to a robot. Progress toward human-like interaction with robots requires a hand gesture dataset against which the performance of proposed algorithms can be judged.

Dataset Features

- 22 participants performed 8 hand gestures in human-robot interaction scenarios taking place in close proximity.

- 8 hand gestures categorized as:

  - 2 types of referencing (pointing) gestures with the ground-truth location of the target pointed at,

  - 2 symbolic gestures,

  - 2 manipulative gestures,

  - 2 interactional gestures.

- A corpus of 836 test scenarios (704 referencing gestures with ground truth, and 132 other gestures).

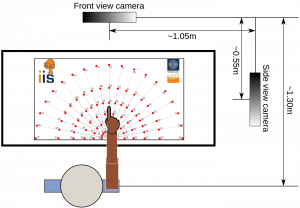

- Hand gestures recorded from two views (frontal and side) using an RGB-D Kinect sensor.

- The data acquisition setup can be easily recreated using a polar coordinate pattern, as shown in the figure, so that new hand gestures can be added in the future.

- Soon to be released publicly.

- Currently available for download with authentication.
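To illustrate how a polar coordinate pattern can be turned into floor positions when recreating a setup of this kind, here is a minimal sketch. The radii and angles are hypothetical placeholders, not values from the IMHG setup, and the function name `polar_pattern` is invented for this example.

```python
import math


def polar_pattern(radii_m, angles_deg):
    """Return (x, y) positions in metres for every radius/angle pair.

    The origin is the centre of the pattern; angles are measured
    counter-clockwise from the positive x axis.
    """
    points = []
    for r in radii_m:
        for a in angles_deg:
            theta = math.radians(a)
            points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


# Hypothetical values for illustration only; the actual IMHG pattern
# dimensions are not given in this description.
RADII_M = [0.5, 1.0]
ANGLES_DEG = [0, 45, 90, 135, 180]

if __name__ == "__main__":
    for x, y in polar_pattern(RADII_M, ANGLES_DEG):
        print(f"{x:+.3f} {y:+.3f}")
```

With a pattern like this marked on the floor or table, candidate target locations can be reproduced from two numbers each (a radius and an angle) rather than from free-form measurements.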

Sample Scenarios

Gestures recorded from the frontal and side views. Top to bottom: Finger pointing, Tool pointing, Thumb up (approve), Thumb down (disapprove), Grasp open, Grasp close, Receive, Fist (stop).
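The gesture names and corpus counts given on this page can be collected into a small lookup table, for example as labels for an evaluation script. The sketch below is illustrative only: the assignment of each named gesture to a category is an assumption inferred from the order in which the gestures and categories are listed here, and the names `GESTURES`, `CORPUS`, and `summarize` are hypothetical.

```python
from collections import Counter

# Gesture name -> category. The pairing is an assumption based on the
# listing order on this page, not a mapping stated by the authors.
GESTURES = {
    "Finger pointing": "referencing",
    "Tool pointing": "referencing",
    "Thumb up (approve)": "symbolic",
    "Thumb down (disapprove)": "symbolic",
    "Grasp open": "manipulative",
    "Grasp close": "manipulative",
    "Receive": "interactional",
    "Fist (stop)": "interactional",
}

# Corpus sizes as stated in the dataset features.
CORPUS = {"referencing": 704, "other": 132}


def summarize():
    """Return (gesture count, gestures per category, total scenarios)."""
    per_category = dict(Counter(GESTURES.values()))
    return len(GESTURES), per_category, sum(CORPUS.values())


if __name__ == "__main__":
    n_gestures, per_category, total = summarize()
    print(n_gestures, per_category, total)
```

The totals agree with the feature list: 8 gestures, 2 per category, and 704 + 132 = 836 test scenarios.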

Reference

Dadhichi Shukla, Ozgur Erkent, Justus Piater. The IMHG dataset: A Multi-View Hand Gesture RGB-D Dataset for Human-Robot Interaction. Towards Standardized Experiments in Human Robot Interactions (Workshop at IROS), 2015. Extended abstract (PDF).

BibTeX

@InProceedings{Shukla-2015-StandardHRI,
  title = {{The IMHG dataset: A Multi-View Hand Gesture RGB-D Dataset for Human-Robot Interaction}},
  author = {Shukla, Dadhichi and Erkent, Ozgur and Piater, Justus},
  booktitle = {{Towards Standardized Experiments in Human Robot Interactions}},
  year = 2015,
  month = 10,
  note = {Workshop at IROS},
  url = {https://iis.uibk.ac.at/public/papers/Shukla-2015-StandardHRI.pdf}
}

Acknowledgement

The research leading to these results has received funding from the European Community’s Seventh Framework Programme FP7/2007-2013 (Specific Programme Cooperation, Theme 3, Information and Communication Technologies) under grant agreement no. 610878, 3rd HAND.

Contact

dadhichi[dot]shukla[at]uibk[dot]ac[dot]at